Based on its experience in text analysis, OrphAnalytics has developed a unique detection tool of ChatGPT texts: this multilingual tool exploits the ChatGPT specifications to detect automatic text production, without the need to know the language models used. This detector, developed without any conflict of interest, ensures the independence of the detection strategy, guaranteeing the respect of good writing practices.

The ChatGPT revolution

At the end of 2022, the public discovered the use of OpenAI's chatbox ChatGPT. Access to this conversational agent allowed for the testing of automated content writing that, when cleverly presented, makes it appear that an artificial intelligence (AI) is participating in a conversation. Essentially, ChatGPT's utterances are generated from a user's question by selecting the most likely terms observed in short strings of words from training texts. An additional AI learning step is added, where human speakers discard nonsensical or contentious responses from the AI.

By writing using probable semantics, ChatGPT understands neither the meaning of the training texts nor the meaning of the message it produces. Its copywriting strategy, which mimics the consensus style of training texts, is not calibrated to produce original relevant responses: ChatGPT's intelligence only serves to produce credible style text whose often unsourced search results should be taken with caution, according to a recent interview with OpenAI CEO Sam Altman: "ChatGPT is incredibly limited," Altman acknowledged in a thread he posted on Twitter in December. "But good enough at some things to create a false sense of grandeur. It's a mistake to trust it with anything important right now."

The consequences of ChatGPT

If ChatGPT meets some legitimate needs of a user such as sketching a draft or summarizing a text, this AI can be used in a fraudulent context: e.g. producing a number of fake news, writing anonymous criminal letters for a cyber-attack for example, answering for a candidate to an academic exam or writing a certifying document in his place.

Fraudulent uses of ChatGPT therefore require the ability to detect automatic texting. Currently, only automatic texting providers propose detectors for texts produced by ChatGPT. A conflict of interest may arise for these AI text providers: their primary interest is to make their AI texts undetectable to any AI redaction detector. They target a massive use of their chatbox, whose produced texts judged redundant or iirrelevant are systematically downgraded by a search engine like Google's.

Two strategies to detect ChatGPT texts

1. OrphAnalytics' author attribution strategy capable of detecting ChatGPT texts

OrphAnalytics' (OA) approach addresses this conflict of interest: while we are not a stakeholder in the production of AI texts, OA's expertise in text analytics allows us to calibrate the detection of AI-produced texts. Since OA's inception in 2014, its algorithms have effectively detected whether a text was produced by its signer or by another person. OA's rare author attribution capability served investigators in the Gregory Affair as well as the New York Times, which used OA's results in an article to identify who in a group of suspects may have produced the QAnon texts, i.e., the body of messages, terrorist according to the FBI.

If a candidate fraudulently produces for academic certification a text written by another person, the difference in style detected by OA will be similar to that measured from a text written by ChatGPT, regardless of the language models used.

2. OrphAnalytics' new ChatGPT detection, independent of language models

How to detect a text written by ChatGPT without its training corpus? By following the specifications of ChatGPT which only tries to produce a text similar to the training texts without understanding the information carried by the training texts or those that inspire or that the AI creates.

Concretely, to write a text similar to the training texts, ChatGPT chooses the most probable neighboring words according to the training texts, while a writer writes his text by organizing his arguments without constraint of choice of words. A human text will therefore be written with a greater freedom of word choice than a ChatGPT text.

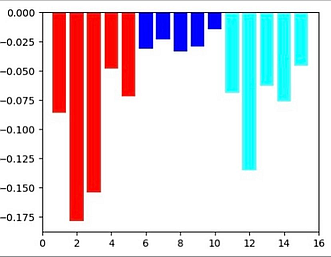

Obtained by Machine Learning, the figure below illustrates how to detect ChatGPT texts. The length of the bars is proportional to the vocabulary constraints: in the center in blue, 5 economic articles from a newspaper columnist, on the left in red, 5 economic articles written by ChatGPT, and on the right in cyan, 5 historical articles produced by ChatGPT. In this example built on articles of about 3000 signs (a little less than a full page of MS-Word text corresponding to about 500 tokens), the 5 texts of the columnist are of a freer semantic choice (short blue bars) than the texts of ChatGPT (longer red and cyan bars). The greater restriction of semantic choice in ChatGPT is of the same order, whether the theme is economic or historical. The example thus illustrates the significant difference in the degree of freedom in the choice of words: great freedom for a writer, constrained freedom for ChatGPT.

OA's ChatGPT detection technique works on texts with a minimum target size of half a page (about 250 tokens). While our untrained approach requires more tokens (any unit of isolatable words in a text) than that currently followed by ChatGPT text detectors using the language model required for GPT text, OA's technique is much faster than those of other ChatGPT robot detectors. Moreover, the OA technique is immediately applicable to other languages for mass text analysis.

Developed from a stylometric know-how independent from the development of conversational agents, the availability of OrphAnalytics thus resolutely ensures the respect of good writing practices.

With our fast algorithms capable of analyzing mass documents, posted on the Web for example, we are open to any B2B collaboration that would profitably use our know-how while respecting our values.